It is difficult to speak of work analysis without putting it in the perspective of recent changes in the industrial world, because the nature of activities and the conditions in which they are carried out have undergone considerable evolution in recent years. The factors giving rise to these changes have been numerous, but there are two whose impact has proved crucial. On the one hand, technological progress with its ever-quickening pace and the upheavals brought about by information technologies have revolutionized jobs (De Keyser 1986). On the other hand, the uncertainty of the economic market has required more flexibility in personnel management and work organization. While workers have gained a wider view of the production process that is less routine-oriented and undoubtedly more systematic, they have at the same time lost exclusive links with an environment, a team, a production tool. It is difficult to view these changes with serenity, but we have to face the fact that a new industrial landscape has been created, sometimes more enriching for those workers who can find their place in it, but also filled with pitfalls and worries for those who are marginalized or excluded. However, one idea is being taken up in firms and has been confirmed by pilot experiments in many countries: it should be possible to guide changes and soften their adverse effects through relevant analyses and by using all resources for negotiation between the different work actors. It is within this context that we must place work analyses today: as tools allowing us to describe tasks and activities better in order to guide interventions of different kinds, such as training, the setting up of new organizational modes or the design of tools and work systems.
We speak of analyses, and not just one analysis, since there exist a large number of them, depending on the theoretical and cultural contexts in which they are developed, the particular goals they pursue, the evidence they collect, or the analyser’s concern for either specificity or generality. In this article, we will limit ourselves to presenting a few characteristics of work analyses and emphasizing the importance of collective work. Our conclusions will highlight other paths that the limits of this text prevent us from pursuing in greater depth.

Some Characteristics of Work Analyses

The context

If the primary goal of any work analysis is to describe what the operator does, or should do, placing it more precisely into its context has often seemed indispensable to researchers. They mention, according to their own views, but in a broadly similar manner, the concepts of context, situation, environment, work domain, work world or work environment. The problem lies less in the nuances between these terms than in the selection of variables that need to be described in order to give them a useful meaning. Indeed, the world is vast and industry is complex, and the characteristics that could be referred to are innumerable. Two tendencies can be noted among authors in the field. The first sees the description of the context as a means of capturing the reader’s interest and providing him or her with an adequate semantic framework. The second has a different theoretical perspective: it attempts to embrace both context and activity, describing only those elements of the context that are capable of influencing the behavior of operators.

The semantic framework

Context has evocative power. It is enough, for an informed reader, to read about an operator in a control room engaged in a continuous process to call up a picture of work carried out through remote command and monitoring, where the tasks of detection, diagnosis, and regulation predominate. What variables need to be described in order to create a sufficiently meaningful context? It all depends on the reader. Nonetheless, there is a consensus in the literature on a few key variables. The nature of the economic sector, the type of production or service, and the size and geographical location of the site are useful.

The production processes, the tools or machines and their level of automation allow certain constraints and certain necessary qualifications to be inferred. The structure of the personnel, together with age, level of qualification and experience, provides crucial data whenever the analysis concerns aspects of training or of organizational flexibility. The organization of work that is established depends more on the firm’s philosophy than on technology. Its description includes, notably, work schedules, the degree of centralization of decisions and the types of control exercised over the workers. Other elements may be added in different cases. They are linked to the firm’s history and culture, its economic situation, work conditions, and any restructuring, mergers, and investments. There exist at least as many systems of classification as there are authors, and there are numerous descriptive lists in circulation. In France, a special effort has been made to generalize simple descriptive methods, notably allowing for the ranking of certain factors according to whether or not they are satisfactory for the operator (RNUR 1976; Guelaud et al. 1977).

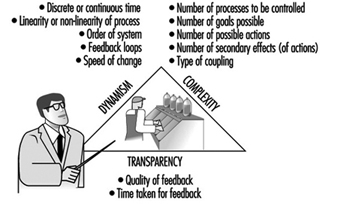

The description of relevant factors regarding the activity

The taxonomy of complex systems described by Rasmussen, Pejtersen, and Schmidts (1990) represents one of the most ambitious attempts to cover at the same time the context and its influence on the operator. Its main idea is to integrate, in a systematic fashion, the different elements of which it is composed and to bring out the degrees of freedom and the constraints within which individual strategies can be developed. Its exhaustive aim makes it difficult to manipulate, but the use of multiple modes of representation, including graphs, to illustrate the constraints has a heuristic value that is bound to be attractive to many readers. Other approaches are more targeted. What the authors seek is the selection of factors that can influence a precise activity. Hence, with an interest in the control of processes in a changing environment, Brehmer (1990) proposes a series of temporal characteristics of the context which affect the control and anticipation of the operator (see figure 1). This author’s typology has been developed from “micro-worlds”, computerized simulations of dynamic situations, but the author himself, along with many others since, used it for the continuous-process industry (Van Daele 1992). For certain activities, the influence of the environment is well known, and the selection of factors is not too difficult. Thus, if we are interested in heart rate in the work environment, we often limit ourselves to describing the air temperatures, the physical constraints of the task or the age and training of the subject—even though we know that by doing so we perhaps leave out relevant elements. For others, the choice is more difficult. Studies on human error, for example, show that the factors capable of producing them are numerous (Reason 1989). Sometimes, when theoretical knowledge is insufficient, only statistical processing, combining context and activity analysis, allows us to bring out the relevant contextual factors (Fadier 1990).

Figure 1. The criteria and sub-criteria of the taxonomy of micro-worlds proposed by Brehmer (1990)

The Task or the Activity?

The task

The task is defined by its objectives, its constraints and the means it requires for achievement. A function within the firm is generally characterized by a set of tasks. The realized task differs from the prescribed task scheduled by the firm for a large number of reasons: the strategies of operators vary within and among individuals, the environment fluctuates and random events require responses that are often outside the prescribed framework. Finally, the task is not always scheduled with correct knowledge of its conditions of execution, hence the need for adaptations in real time. But even if the task is updated during the activity, sometimes to the point of being transformed, it still remains the central reference.

Questionnaires, inventories, and taxonomies of tasks are numerous, especially in the English-language literature—the reader will find excellent reviews in Fleishman and Quaintance (1984) and in Greuter and Algera (1989). Certain of these instruments are merely lists of elements—for example, the action verbs to illustrate tasks—that are checked off according to the function studied. Others have adopted a hierarchical principle, characterizing a task as interlocking elements, ordered from the global to the particular. These methods are standardized and can be applied to a large number of functions; they are simple to use, and the analytical stage is much shortened. But where it is a question of defining specific work, they are too static and too general to be useful.

Next, there are those instruments requiring more skill on the part of the researcher; since the elements of analysis are not predefined, it is up to the researcher to characterize them. The already outdated critical incident technique of Flanagan (1954), where the observer describes a function by reference to its difficulties and identifies the incidents which the individual will have to face, belongs to this group.

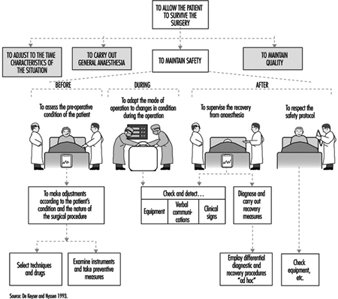

It is also the path adopted by cognitive task analysis (Roth and Woods 1988). This technique aims to bring to light the cognitive requirements of a job. One way to do this is to break the job down into goals, constraints and means. Figure 2 shows how the task of an anesthetist, characterized first by a very global goal of patient survival, can be broken down into a series of sub-goals, which can themselves be classified as actions and means to be employed. More than 100 hours of observation in the operating theatre and subsequent interviews with anesthetists were necessary to obtain this synoptic “photograph” of the requirements of the function. This technique, although quite laborious, is nevertheless useful in ergonomics in determining whether all the goals of a task are provided with the means of attaining them. It also allows for an understanding of the complexity of a task (its particular difficulties and conflicting goals, for example) and facilitates the interpretation of certain human errors. But it suffers, as do other methods, from the absence of a descriptive language (Grant and Mayes 1991). Moreover, it does not permit hypotheses to be formulated as to the nature of the cognitive processes brought into play to attain the goals in question.

Figure 2. Cognitive analysis of the task: general anesthesia

Other approaches have analyzed the cognitive processes associated with given tasks by drawing up hypotheses as to the information processing necessary to accomplish them. A frequently employed cognitive model of this kind is Rasmussen’s (1986), which distinguishes, according to the nature of the task and its familiarity to the subject, three possible levels of activity, based on skill-based habits and reflexes, on acquired rule-based procedures or on knowledge-based procedures. But other models or theories that reached the height of their popularity during the 1970s remain in use. Hence, the theory of optimal control, which considers the human operator as a controller of discrepancies between assigned and observed goals, is sometimes still applied to cognitive processes. And modeling by means of networks of interconnected tasks and flow charts continues to inspire the authors of cognitive task analysis; figure 3 provides a simplified description of the behavioral sequences in an energy-control task, constructing a hypothesis about certain mental operations. All these attempts reflect the concern of researchers to bring together in the same description not only elements of the context but also the task itself and the cognitive processes that underlie it, and to reflect the dynamic character of work as well.

Figure 3. A simplified description of the determinants of a behavior sequence in energy control tasks: a case of unacceptable consumption of energy

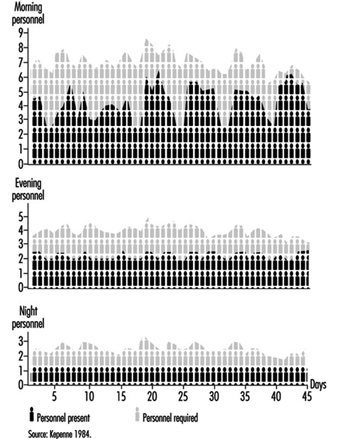

Since the arrival of the scientific organization of work, the concept of the prescribed task has been criticized because it has been viewed as involving the imposition on workers of tasks that are not only designed without consulting their needs but are also often accompanied by a specific performance time, a restriction not welcomed by many workers. Even if the imposition aspect has become rather more flexible today and even if the workers contribute more often to the design of tasks, an assigned time for tasks remains necessary for schedule planning and remains an essential component of work organization. The quantification of time should not always be perceived in a negative manner. It constitutes a valuable indicator of workload. A simple but common method of measuring the time pressure exerted on a worker consists of determining the quotient of the time necessary for the execution of a task divided by the available time. The closer this quotient is to unity, the greater the pressure (Wickens 1992). Moreover, quantification can be used in flexible but appropriate personnel management. Let us take the case of nurses where the technique of predictive analysis of tasks has been generalized, for example, in the Canadian regulation Planning of Required Nursing (PRN 80) (Kepenne 1984) or one of its European variants. Thanks to such task lists, accompanied by their mean times of execution, one can, each morning, taking into account the number of patients and their medical conditions, establish a care schedule and a distribution of personnel. Far from being a constraint, PRN 80 has, in a number of hospitals, demonstrated that a shortage of nursing personnel exists, since the technique allows a difference to be established (see figure 4) between the desired and the observed, that is, between the number of staff necessary and the number available, and even between the tasks planned and the tasks carried out. The times calculated are only averages, and the fluctuations in the situation do not always make them applicable, but this negative aspect is minimized by a flexible organization that accepts adjustments and allows the personnel to participate in effecting those adjustments.
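The two calculations mentioned above, the time-pressure quotient and the comparison between staff required and staff present, can be illustrated with a minimal sketch. It is not the PRN 80 procedure itself: the task names, mean times, shift length and function names below are hypothetical values chosen only to make the arithmetic visible.

```python
from dataclasses import dataclass


def time_pressure(required_minutes: float, available_minutes: float) -> float:
    """Quotient of the time needed for a task to the time available.

    The closer the result is to 1 (or above it), the greater the pressure.
    """
    if available_minutes <= 0:
        raise ValueError("available_minutes must be positive")
    return required_minutes / available_minutes


@dataclass(frozen=True)
class CareTask:
    name: str
    mean_minutes: float  # mean execution time (hypothetical, not taken from PRN 80)
    occurrences: int     # how many times the task is planned during the shift


def required_staff(tasks: list[CareTask], shift_minutes: float) -> float:
    """Number of staff needed if each person works the whole shift."""
    total_minutes = sum(t.mean_minutes * t.occurrences for t in tasks)
    return total_minutes / shift_minutes


def staffing_gap(tasks: list[CareTask], shift_minutes: float, staff_present: int) -> float:
    """Positive result: shortage of personnel; negative result: surplus."""
    return required_staff(tasks, shift_minutes) - staff_present


# Hypothetical morning schedule for one ward.
example_tasks = [
    CareTask("hygiene care", mean_minutes=20, occurrences=12),
    CareTask("medication round", mean_minutes=5, occurrences=48),
    CareTask("wound dressing", mean_minutes=15, occurrences=16),
]

print(time_pressure(45, 60))                   # a 45-minute task in a 60-minute slot
print(required_staff(example_tasks, 480))      # staff needed for an 8-hour shift
print(staffing_gap(example_tasks, 480, 1))     # shortage when one nurse is present
```

Because the mean times are only averages, a gap computed in this way is best read as an indicator to be discussed with the personnel concerned rather than as a fixed staffing rule.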

Figure 4. Discrepancies between the numbers of personnel present and required on the basis of PRN80

The activity, the evidence, and the performance

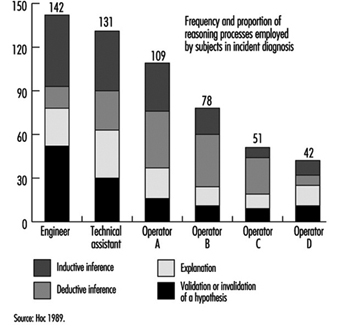

An activity is defined as the set of behaviors and resources used by the operator so that work occurs, that is to say, the transformation or production of goods or the rendering of a service. This activity can be understood through observation in different ways. Faverge (1972) has described four forms of analysis. The first is an analysis in terms of gestures and postures, where the observer locates, within the visible activity of the operator, classes of behavior that are recognizable and repeated during work. These activities are often coupled with a precise response: for example, the heart rate, which allows us to assess the physical load associated with each activity. The second form of analysis is in terms of information uptake. What is discovered, through direct observation or with the aid of cameras or eye-movement recorders, is the set of signals picked up by the operator in the information field surrounding him or her. This analysis is particularly useful in cognitive ergonomics in trying to better understand the information processing carried out by the operator. The third type of analysis is in terms of regulation. The idea is to identify the adjustments of activity carried out by the operator in order to deal with either fluctuations in the environment or changes in his or her own condition. There we find the direct intervention of context within the analysis. One of the most frequently cited research projects in this area is that of Sperandio (1972). This author studied the activity of air traffic controllers and identified important strategy changes during an increase in air traffic. He interpreted them as an attempt to simplify the activity by aiming to maintain an acceptable load level, while at the same time continuing to meet the requirements of the task. The fourth is an analysis in terms of thought processes. This type of analysis has been widely used in the ergonomics of highly automated posts. Indeed, the design of computerized aids and notably intelligent aids for the operator requires a thorough understanding of the way in which the operator reasons in order to solve certain problems. The reasoning involved in scheduling, anticipation, and diagnosis has been the subject of analyses, an example of which can be found in figure 5. However, evidence of mental activity can only be inferred. Apart from certain observable aspects of behavior, such as eye movements and problem-solving time, most of these analyses resort to the verbal response. Particular emphasis has been placed, in recent years, on the knowledge necessary to accomplish certain activities, with researchers trying not to postulate it at the outset but to make it apparent through the analysis itself.

Figure 5. Analysis of mental activity. Strategies in the control of processes with long response times: the need for computerized support in diagnosis

Such efforts have brought to light the fact that almost identical performances can be obtained with very different levels of knowledge, as long as operators are aware of their limits and apply strategies adapted to their capabilities. Hence, in our study of the start-up of a thermoelectric plant (De Keyser and Housiaux 1989), the start-ups were carried out by both engineers and operators. The theoretical and procedural knowledge that these two groups possessed, which had been elicited by means of interviews and questionnaires, was very different. The operators in particular sometimes had an erroneous understanding of the variables in the functional links of the process. In spite of this, the performances of the two groups were very close. But the operators took into account more variables in order to verify the control of the start-up and undertook more frequent verifications. Such results were also obtained by Amalberti (1991), who mentioned the existence of metaknowledge allowing experts to manage their own resources.

What evidence of activity is appropriate to elicit? Its nature, as we have seen, depends closely on the form of analysis planned. Its form varies according to the degree of methodological care exercised by the observer. Provoked evidence is distinguished from spontaneous evidence and concomitant from subsequent evidence. Generally speaking, when the nature of the work allows, concomitant and spontaneous evidence are to be preferred. They are free of various drawbacks such as the unreliability of memory, observer interference, the effect of rationalizing reconstruction on the part of the subject, and so forth. To illustrate these distinctions, we will take the example of verbalizations. Spontaneous verbalizations are verbal exchanges, or monologues expressed spontaneously without being requested by the observer; provoked verbalizations are those made at the specific request of the observer, such as the request made to the subject to “think aloud”, which is well known in the cognitive literature. Both types can be produced in real time, during work, and are thus concomitant. They can also be subsequent, as in interviews, or in subjects’ verbalizations when they view videotapes of their work. As for the validity of the verbalizations, the reader should not ignore the doubt raised in this regard by the controversy between Nisbett and De Camp Wilson (1977) and White (1988) and the precautions suggested by numerous authors aware of their importance in the study of mental activity in view of the methodological difficulties encountered (Ericson and Simon 1984; Savoyant and Leplat 1983; Caverni 1988; Bainbridge 1986).

The organization of this evidence, its processing and its formalization require descriptive languages and sometimes analyses that go beyond field observation. Those mental activities which are inferred from the evidence, for example, remain hypothetical. Today they are often described using languages derived from artificial intelligence, making use of representations in terms of schemes, production rules, and connecting networks. Moreover, the use of computerized simulations (micro-worlds) to pinpoint certain mental activities has become widespread, even though the validity of the results obtained from such simulations, in view of the complexity of the industrial world, is subject to debate. Finally, we must mention the cognitive models of certain mental activities extracted from the field. Among the best known are the model of diagnosis by the operator of a nuclear power plant, developed at ISPRA (Decortis and Cacciabue 1990), and the model of planning by the combat pilot, developed at the Centre d’études et de recherches de médecine aérospatiale (CERMA) (Amalberti et al. 1989).

Measurement of the discrepancies between the performance of these models and that of real, living operators is a fruitful field in activity analysis. Performance is the outcome of the activity, the final response given by the subject to the requirements of the task. It is expressed at the level of production: productivity, quality, error, incident, accident—and even, at a more global level, absenteeism or turnover. But it must also be identified at the individual level: the subjective expression of satisfaction, stress, fatigue or workload, and many physiological responses are also performance indicators. Only the entire set of data permits interpretation of the activity—that is to say, judging whether or not it furthers the desired goals while remaining within human limits. There exists a set of norms which, up to a certain point, guide the observer. But these norms are not situated—they do not take into account the context, its fluctuations and the condition of the worker. This is why in design ergonomics, even when rules, norms, and models exist, designers are advised to test the product using prototypes as early as possible and to evaluate the users’ activity and performance.

Individual or Collective Work?

While in the vast majority of cases, work is a collective act, most work analyses focus on tasks or individual activities. Nonetheless, the fact is that technological evolution, just like work organization, today emphasizes distributed work, whether it be between workers and machines or simply within a group. What paths have been explored by authors so as to take this distribution into account (Rasmussen, Pejtersen and Schmidts 1990)? They focus on three aspects: structure, the nature of exchanges and structural lability.

Structure

Whether we view structure as elements of the analysis of people, or of services, or even of different branches of a firm working in a network, the description of the links that unite them remains a problem. We are very familiar with the organigrams within firms that indicate the structure of authority and whose various forms reflect the organizational philosophy of the firm: very hierarchically organized for a Taylor-like structure, or flattened like a rake, even matrix-like, for a more flexible structure. Other descriptions of distributed activities are possible: an example is given in figure 6. More recently, the need for firms to represent their information exchanges at a global level has led to a rethinking of information systems. Thanks to certain descriptive languages (for example, design schemas or entity-relation-attribute matrices) the structure of relations at the collective level can today be described in a very abstract manner and can serve as a springboard for the creation of computerized management systems.

Figure 6. Integrated life cycle design

The nature of exchanges

Simply having a description of the links uniting the entities says little about the content itself of the exchanges; of course the nature of the relation can be specified—movement from place to place, information transfers, hierarchical dependence, and so on—but this is often quite inadequate. The analysis of communications within teams has become a favored means of capturing the very nature of collective work, encompassing subjects mentioned, creation of a common language in a team, modification of communications when circumstances are critical, and so forth (Tardieu, Nanci and Pascot 1985; Rolland 1986; Navarro 1990; Van Daele 1992; Lacoste 1983; Moray, Sanderson and Vincente 1989). Knowledge of these interactions is particularly useful for the creation of computer tools, notably decision-making aids for understanding errors. The different stages and the methodological difficulties linked to the use of this evidence have been well described by Falzon (1991).

Structural lability

It is the work on activities rather than on tasks that has opened up the field of structural lability, that is to say, of the constant reconfigurations of collective work under the influence of contextual factors. Studies such as those of Rogalski (1991), who over a long period analyzed the collective activities dealing with forest fires in France, and Bourdon and Weill Fassina (1994), who studied the organizational structure set up to deal with railway accidents, are both very informative. They clearly show how the context molds the structure of exchanges, the number and type of actors involved, the nature of the communications and the number of parameters essential to the work. The more this context fluctuates, the further the fixed descriptions of the task are removed from reality. Knowledge of this lability, and a better understanding of the phenomena that take place within it, are essential in planning for the unpredictable and in providing better training for those involved in collective work in a crisis.

Conclusions

The various phases of the work analysis that have been described are an iterative part of any human factors design cycle (see figure 6). In the design of any technical object, whether a tool, a workstation or a factory, in which human factors are a consideration, certain information is needed at the right time. In general, the beginning of the design cycle is characterized by a need for data involving environmental constraints, the types of jobs that are to be carried out, and the various characteristics of the users. This initial information allows the specifications of the object to be drawn up so as to take into account work requirements. But this is, in some sense, only a coarse model compared to the real work situation. This explains why models and prototypes are necessary that, from their inception, allow not the jobs themselves, but the activities of the future users to be evaluated. Consequently, while the design of the images on a monitor in a control room can be based on a thorough cognitive analysis of the job to be done, only a data-based analysis of the activity will allow an accurate determination of whether the prototype will actually be of use in the real work situation (Van Daele 1988). Once the finished technical object is put into operation, greater emphasis is put on the performance of the users and on dysfunctional situations, such as accidents or human error. The gathering of this type of information allows the final corrections to be made that will increase the reliability and usability of the completed object. Both the nuclear industry and the aeronautics industry serve as examples: operational feedback involves reporting every incident that occurs. In this way, the design loop comes full circle.